Five tricks you should know about Azure Machine Learning Service

Azure Machine Learning Service brings together the essential tools for building a real end-to-end machine learning project: from data sources to predictive web services, including versioning (code and models), scalability (compute resources) and monitoring.

In this post, we will look at five “secret” features of Azure Machine Learning. Let’s discover them!

1-Generate a perfect score.py script

Automated ML can quickly get you a model, and you can deploy one of the resulting models without writing any code. Wait a minute, will you really choose the best one? The “ensemble” model often gets the best score, but it is also the most complex and the least interpretable. Whichever model you pick, the deployment wizard will still ask you for a scoring script.

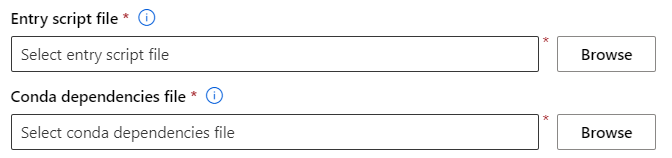

Wait, you were expecting a fully no-code experience, right? Now, have a look at the “Outputs + logs” tab of the model’s child run.

There you will find the expected scoring script and the related environment definition, a Conda YAML file. Help yourself: download these two files locally and upload them when the wizard asks for them!

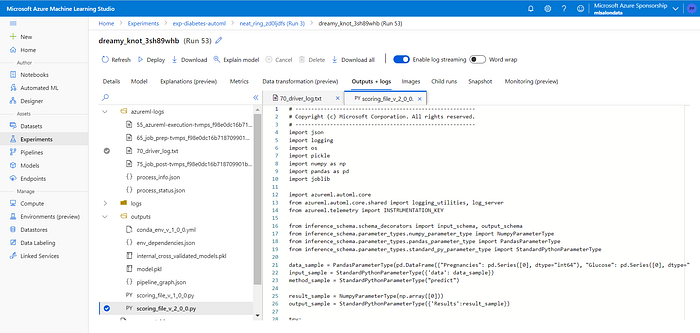
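If you are curious about what the wizard expects, an Azure ML scoring script exposes two functions: init(), called once when the service starts, and run(), called for every request. Here is a minimal sketch of that shape, not the file Automated ML generates. The model.pkl file name, the "data" key in the request body and the list-of-weights stand-in model are assumptions made for illustration; the downloaded file will be longer and specific to your model.

```python
import json
import os
import pickle

model = None  # filled once by init(), reused by every call to run()


def init():
    """Called once when the service container starts: load the model."""
    global model
    # Azure ML sets AZUREML_MODEL_DIR to the folder holding the registered model.
    model_dir = os.environ.get("AZUREML_MODEL_DIR", ".")
    # "model.pkl" is an assumed file name; check the one in your own run outputs.
    with open(os.path.join(model_dir, "model.pkl"), "rb") as f:
        model = pickle.load(f)


def run(raw_data):
    """Called for every request: parse the JSON body, predict, return the result."""
    try:
        rows = json.loads(raw_data)["data"]  # assumed request shape: {"data": [[...], ...]}
        # Stand-in for model.predict(rows): here the "model" is a plain list of weights.
        predictions = [sum(w * x for w, x in zip(model, row)) for row in rows]
        return {"result": predictions}
    except Exception as exc:
        # Return the error message instead of crashing the service.
        return {"error": str(exc)}
```

Reading the generated file with this skeleton in mind makes it easier to spot where the model is loaded and where the input is parsed.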

2-Rotate your Container Registry keys

As we know, Azure Machine Learning stores a lot of Docker images in its Container Registry resource (see also tip #5). The admin user must be enabled, and two passwords are generated automatically. For security reasons, you may want to regenerate these passwords.

But once you do, Azure ML can no longer pull any Docker images! You have to resync the keys with the az ml CLI. First, sign in with the az login command, or use the Cloud Shell in the Azure portal, which is already signed in.

Check the version of the az ml extension: you should use version 2.0.1a6 or later. Then run the following command:

az ml workspace sync-keys -w <your_workspace> -g <your_resource_group>
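If you rotate keys on a schedule, you may prefer to call the command above from a script. The following is a small sketch that only wraps that exact command with Python's subprocess module. The function names and the dry_run switch are hypothetical, and the az CLI must be installed and signed in for the real call to succeed.

```python
import shlex
import subprocess


def sync_keys_command(workspace, resource_group):
    """Build the argument list for the sync-keys command quoted above."""
    return ["az", "ml", "workspace", "sync-keys", "-w", workspace, "-g", resource_group]


def sync_keys(workspace, resource_group, dry_run=True):
    """Print the command; run it only when dry_run is False."""
    argv = sync_keys_command(workspace, resource_group)
    print(shlex.join(argv))
    if not dry_run:
        # check=True raises CalledProcessError if the CLI returns a non-zero code.
        subprocess.run(argv, check=True)
    return argv
```

With the default dry_run=True, sync_keys("my-ws", "my-rg") only prints the command, which is handy for checking the names before running it for real.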

3-Code local, execute remote

On a compute instance (a dedicated virtual machine), a Jupyter server lets you execute notebooks in a Python kernel that already includes the azureml-sdk package. It all sounds too easy, right? Be careful: while the compute instance is running, the bill is running too… Why not use your own laptop? You can install the Python SDK, preferably in a virtual environment. Pin the version number of the package to keep the environment stable.

pip install -U azureml-sdk==1.37.0

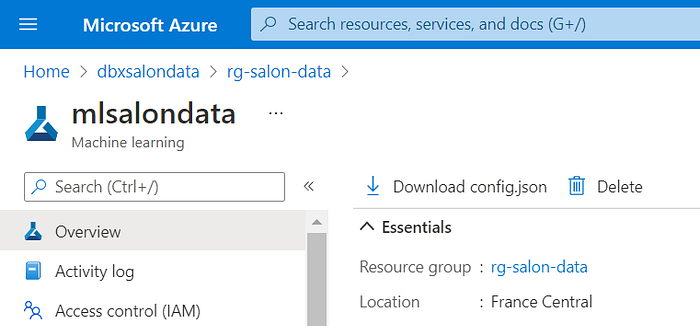

Download the config.json file from the Azure portal and put it in your local project folder.
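The downloaded file is a small JSON document holding the subscription ID, the resource group and the workspace name. Before going further, a quick sanity check can save some head-scratching. This is only a sketch: the helper name load_workspace_config is hypothetical and it uses nothing but the standard library.

```python
import json

REQUIRED_KEYS = ("subscription_id", "resource_group", "workspace_name")


def load_workspace_config(path="config.json"):
    """Read config.json and fail early if one of the expected keys is missing."""
    with open(path, encoding="utf-8") as f:
        config = json.load(f)
    missing = [key for key in REQUIRED_KEYS if not config.get(key)]
    if missing:
        raise ValueError(f"{path} is missing: {', '.join(missing)}")
    return config
```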

To interact with your Azure ML workspace, you have to authenticate, either interactively (login and password in a web browser) or with a service principal.

“You have logged into Microsoft Azure ML Service!”
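Both authentication modes can live in the same helper. The sketch below assumes that the service principal credentials are exposed through three environment variables whose names (AML_TENANT_ID, AML_CLIENT_ID, AML_CLIENT_SECRET) are purely hypothetical, and falls back to the browser login otherwise. The azureml imports are done inside connect(), so the SDK is only needed when you actually connect.

```python
import os

# Hypothetical variable names; use whatever your own setup provides.
SP_VARIABLES = ("AML_TENANT_ID", "AML_CLIENT_ID", "AML_CLIENT_SECRET")


def pick_auth_mode(env=None):
    """Return "service_principal" when all three variables are set, else "interactive"."""
    env = os.environ if env is None else env
    return "service_principal" if all(env.get(k) for k in SP_VARIABLES) else "interactive"


def connect(env=None):
    """Open the workspace described by config.json (requires azureml-sdk)."""
    from azureml.core import Workspace
    from azureml.core.authentication import (
        InteractiveLoginAuthentication,
        ServicePrincipalAuthentication,
    )

    env = os.environ if env is None else env
    if pick_auth_mode(env) == "service_principal":
        auth = ServicePrincipalAuthentication(
            tenant_id=env["AML_TENANT_ID"],
            service_principal_id=env["AML_CLIENT_ID"],
            service_principal_password=env["AML_CLIENT_SECRET"],
        )
    else:
        auth = InteractiveLoginAuthentication()  # opens a web browser to sign in
    # Looks for config.json in the current folder and its parents.
    return Workspace.from_config(auth=auth)
```

On a laptop you would typically get the interactive branch, while a scheduled job would set the three variables and never open a browser.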

You will then be able to register datasets or models and deploy web services on Azure without running a compute instance. Moreover, it is easy to use Git commands from the Visual Studio Code interface.

Download this code sample from GitHub.

4-Link Databricks to Azure ML

Azure Databricks is perhaps the perfect toolbox for working with large amounts of data stored in a data lake: notebooks, distributed computation, integrated open-source tools like Delta or MLflow… But you may want to keep managing web services in the Azure ML portal, or use its drift detection feature. The choice is not exclusive: you can use both. Just as we can work from a local machine (see the previous tip), we can also work from a Databricks cluster and interact with Azure ML.

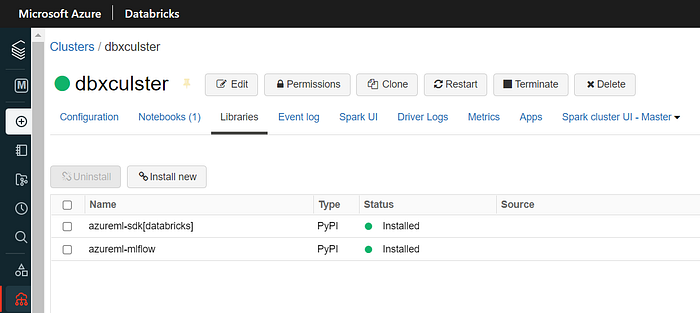

First of all, install the azureml-sdk[databricks] and azureml-mlflow packages on your cluster.

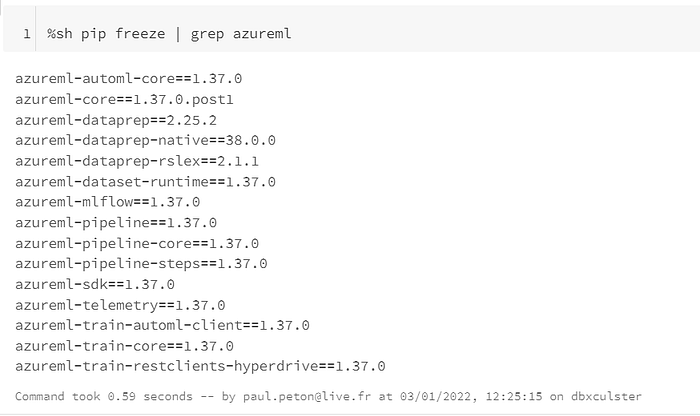

We can quickly check that all dependencies are now installed.

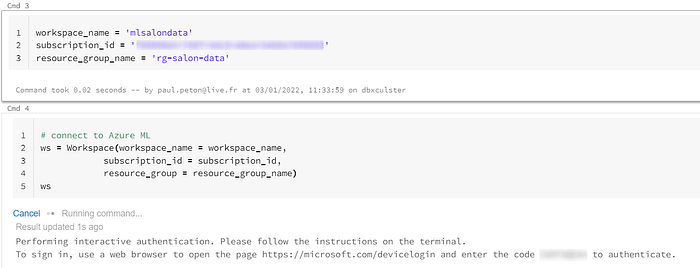
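One way to run this check from a notebook cell, sketched with the standard library only: ask importlib.metadata for the installed version of each distribution. The helper name installed_versions is hypothetical, and the two distribution names are the ones installed in the previous step.

```python
from importlib import metadata


def installed_versions(distributions=("azureml-sdk", "azureml-mlflow")):
    """Map each distribution name to its installed version, or None if absent."""
    versions = {}
    for name in distributions:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


for name, version in installed_versions().items():
    print(f"{name}: {version or 'NOT INSTALLED'}")
```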

Interactive authentication is needed, and you stay connected until the cluster restarts.

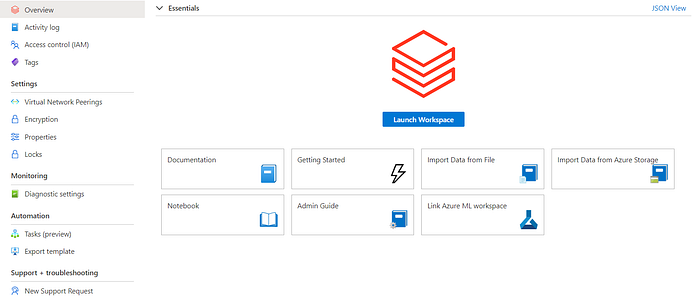

Let’s do some configuration in the Azure portal. On the overview page of the Databricks resource, click the “Link Azure ML workspace” button.

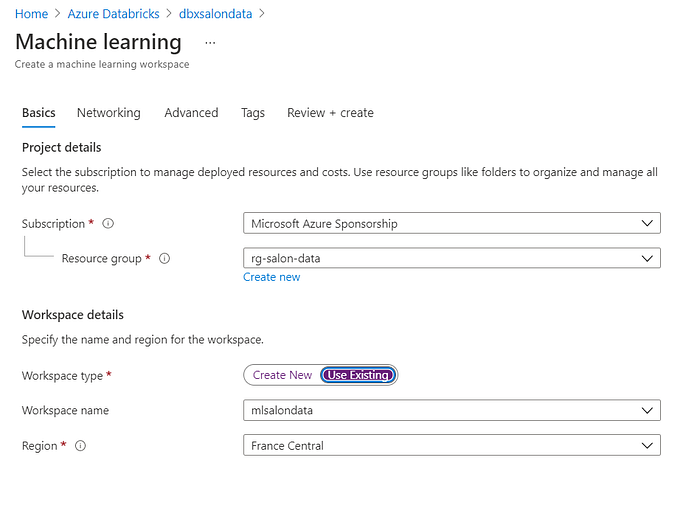

Some information is needed to identify the Azure Machine Learning workspace.

According to this discussion: “Once you have linked with this experience, you don’t need to run the following ws.write_config(), Workspace.from_config(), and mlflow.set_tracking_uri().” Here, we launch a notebook in Databricks with some code that sets the tracking URI and the experiment name.

Download the notebook from GitHub.
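For readers who do not open the notebook, the core of that configuration can be sketched in a few lines. This is not the notebook's exact code: the helper name configure_tracking, the experiment name and the placeholder identifiers are hypothetical. It relies on Workspace.get_mlflow_tracking_uri(), which becomes available once azureml-mlflow is installed, and on the standard mlflow.set_tracking_uri() and mlflow.set_experiment() calls.

```python
def configure_tracking(workspace, mlflow_module, experiment_name):
    """Send MLflow runs to the Azure ML workspace and select the experiment."""
    tracking_uri = workspace.get_mlflow_tracking_uri()
    mlflow_module.set_tracking_uri(tracking_uri)
    mlflow_module.set_experiment(experiment_name)
    return tracking_uri


# Typical notebook usage (needs azureml-sdk[databricks] and azureml-mlflow):
#   import mlflow
#   from azureml.core import Workspace
#   ws = Workspace(
#       subscription_id="<subscription_id>",
#       resource_group="<resource_group>",
#       workspace_name="<workspace_name>",
#   )
#   configure_tracking(ws, mlflow, "databricks-demo")  # hypothetical experiment name
#   with mlflow.start_run():
#       mlflow.log_metric("rmse", 0.42)  # illustrative value only
```

Passing the workspace and the mlflow module as arguments keeps the helper easy to try out with stand-in objects before running it on a cluster.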

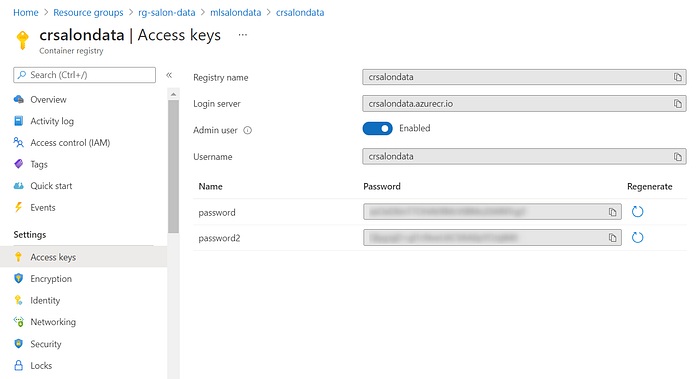

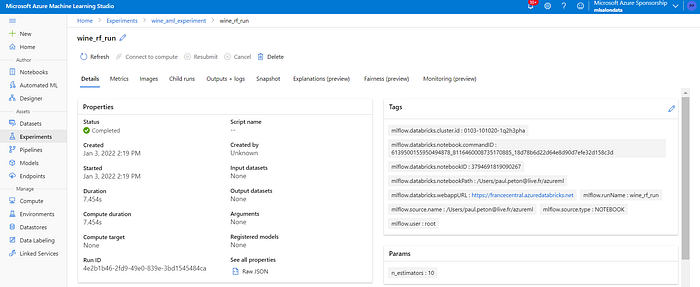

When we go back to Azure ML Studio, we find an experiment with a run and all the logs are now in the workspace.

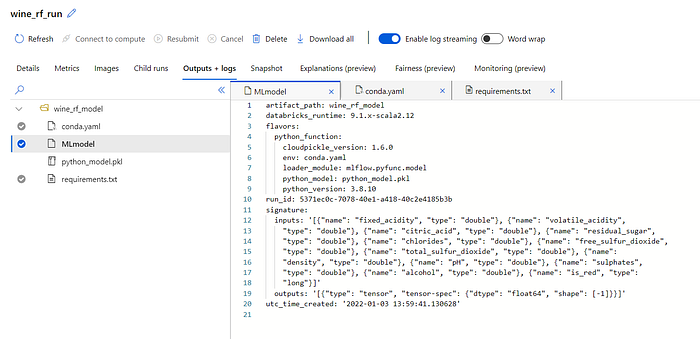

In the “Outputs + logs” menu, we find all the files needed to deploy the model as a predictive web service.

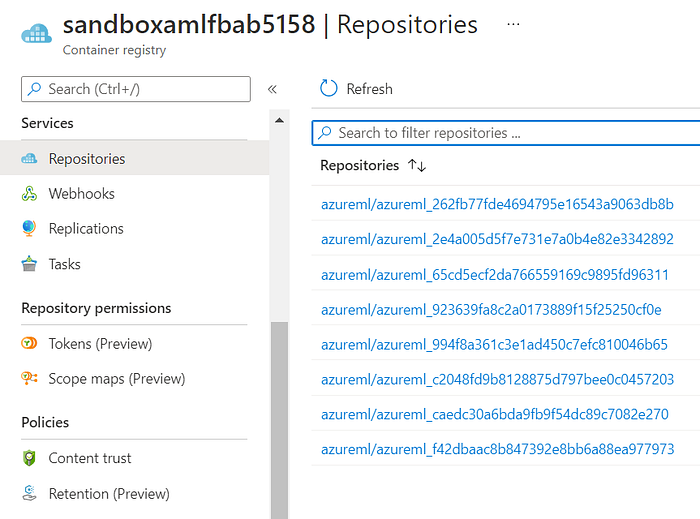

5-Clean your Container Registry periodically

What a mess! You forgot that a container registry was created and linked to the Azure ML service, and a lot of images are now stored in it. You have exceeded the quota included in the pricing tier, and everything above it costs a lot of money!

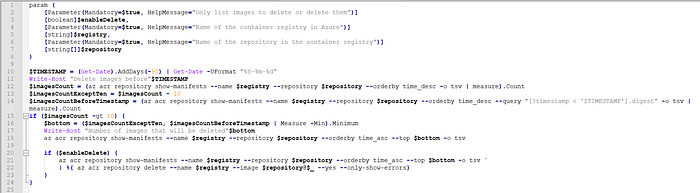

We are going to schedule a cleanup script to delete old images.

Download the script from GitHub.

This script lists or deletes the Docker images older than 90 days, but always keeps a minimum of 10 images, even when they are too old.
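The retention rule itself fits in a few lines. The sketch below is not the script from GitHub: it only illustrates the selection logic on an in-memory list, assuming that each image is described by a (name, last update time) pair and that the rule applies to one list at a time. The function name and its parameters are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def select_images_to_delete(images, now=None, max_age_days=90, keep_min=10):
    """Return the names of the images to delete.

    `images` is a list of (name, last_update) tuples with timezone-aware datetimes.
    """
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=max_age_days)
    newest_first = sorted(images, key=lambda item: item[1], reverse=True)
    # The keep_min most recent images are always kept, whatever their age.
    candidates = newest_first[keep_min:]
    return [name for name, updated in candidates if updated < cutoff]
```

With 12 images that are all older than 90 days, only the two oldest are selected, and a registry holding 10 images or fewer is never touched.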

You can now deploy this script in an Azure Function and schedule it, for example, from an Azure Data Factory pipeline. Finally, add the #finops keyword to your CV!